From deep learning training to LLM inference, the NVIDIA H100 Tensor Core GPU accelerates the most demanding AI workloads

Up to 30x improvement on LLM inference over the A100 on the largest models

Up to 4x improvement on training over the A100

TensorDock partners with Voltage Park to offer thousands of H100s on-demand, ready to deploy!

Llama 7B inference speed using TensorRT-LLM in FP8

The H100 has the most memory bandwidth of any of our GPUs

On TensorDock's cloud platform. See below for more pricing details

The NVIDIA H100 is based on NVIDIA's latest GPU architecture, Hopper. The H100 is engineered to excel in tasks involving deep learning, data analytics, and scientific computing.

The H100 is fast. Its 528 fourth-generation Tensor Cores and 16,896 CUDA Cores enable it to provide breakthrough performance in AI model training and inference.

Apart from pure performance, its massive 80 GB of VRAM and 3.35 TB/s of memory bandwidth make it ideal for large-scale LLM, data analytics, and scientific computing tasks that require large amounts of fast memory.
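
To get a feel for why memory bandwidth matters for LLM inference, here is a minimal back-of-the-envelope sketch. It assumes that generating one token for a single request reads every model weight from VRAM once, so bandwidth sets a ceiling on per-request speed. The function name and the 7-billion-parameter FP8 example are illustrative assumptions, not a benchmark.

```python
# Rough ceiling on single-request decode speed when generation is limited
# by memory bandwidth. Simplifying assumption: each new token requires
# reading all model weights from VRAM exactly once.

H100_BANDWIDTH_BYTES_PER_S = 3.35e12  # 3.35 TB/s, the figure quoted above


def max_decode_tokens_per_s(num_params: float,
                            bytes_per_param: float,
                            bandwidth_bytes_per_s: float = H100_BANDWIDTH_BYTES_PER_S) -> float:
    """Return bandwidth divided by the bytes of weights read per token."""
    weight_bytes = num_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    # Assumption: a 7-billion-parameter model stored in FP8 (1 byte per parameter).
    print(f"{max_decode_tokens_per_s(7e9, 1.0):.0f} tokens/s per request (upper bound)")
```

Batched serving reuses each weight read across many requests, which is how aggregate throughput can be far higher than this per-request ceiling.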

The H100 also boasts advanced features like Multi-Instance GPU (MIG), enabling it to efficiently handle multiple workloads simultaneously: up to seven 10 GiB VRAM instances per physical GPU.
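
As a quick sanity check on whether a model could live inside a single MIG instance, the sketch below compares weight size against the nominal 10 GiB slice. The 20% headroom for KV cache and activations is an assumption for illustration, and usable memory in a real slice may be slightly below the nominal figure.

```python
# Check whether model weights (plus some headroom) fit in a memory budget.
# The headroom fraction is an illustrative assumption, not a measured value.

GIB = 1024 ** 3
MIG_SLICE_BYTES = 10 * GIB  # nominal 10 GiB per MIG instance, as quoted above


def weights_fit(num_params: float,
                bytes_per_param: float,
                capacity_bytes: float,
                headroom: float = 0.2) -> bool:
    """Return True if weights plus fractional headroom fit in capacity_bytes."""
    needed = num_params * bytes_per_param * (1 + headroom)
    return needed <= capacity_bytes


if __name__ == "__main__":
    # Assumption: a 7-billion-parameter model in FP8 (1 byte) and FP16 (2 bytes).
    print("7B FP8 fits in one slice: ", weights_fit(7e9, 1.0, MIG_SLICE_BYTES))
    print("7B FP16 fits in one slice:", weights_fit(7e9, 2.0, MIG_SLICE_BYTES))
```

Under these assumptions the FP8 model fits in one slice while the FP16 model does not, so the FP16 model would need a larger partition or the full GPU.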

Overall, the NVIDIA H100 represents a significant leap forward in GPU technology, offering unmatched performance and efficiency for the most demanding computing tasks.

See full data sheet.

A token is a word or subword, so the H100's 19,694 tokens per second on the Llama 7B model translates to somewhat fewer than 19,694 words per second.

CUDA cores are the basic processing units of NVIDIA GPUs. In general, the more CUDA cores a GPU has, the more work it can do in parallel.

Tensor cores are specialized processing units designed to efficiently execute the matrix operations used in deep learning.

With sparsity.

The more VRAM, the more data a GPU can store at once.
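
To put the Llama 7B throughput figure in perspective, here is a small sketch that converts a token rate into an approximate word rate and a generation time. The 0.75 words-per-token ratio is a rough rule of thumb for English text and is an assumption; the real ratio depends on the tokenizer and the content.

```python
# Convert a token throughput into approximate words per second and
# estimate how long a batch of tokens takes to generate.

TOKENS_PER_SECOND = 19_694   # Llama 7B figure quoted above
WORDS_PER_TOKEN = 0.75       # assumption: rough rule of thumb for English text


def words_per_second(tokens_per_s: float,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Approximate word rate for a given token rate."""
    return tokens_per_s * words_per_token


def seconds_to_generate(num_tokens: float,
                        tokens_per_s: float = TOKENS_PER_SECOND) -> float:
    """Time in seconds to generate num_tokens at a steady token rate."""
    return num_tokens / tokens_per_s


if __name__ == "__main__":
    print(f"~{words_per_second(TOKENS_PER_SECOND):,.0f} words/s")
    print(f"~{seconds_to_generate(1_000_000):.1f} s per million tokens")
```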

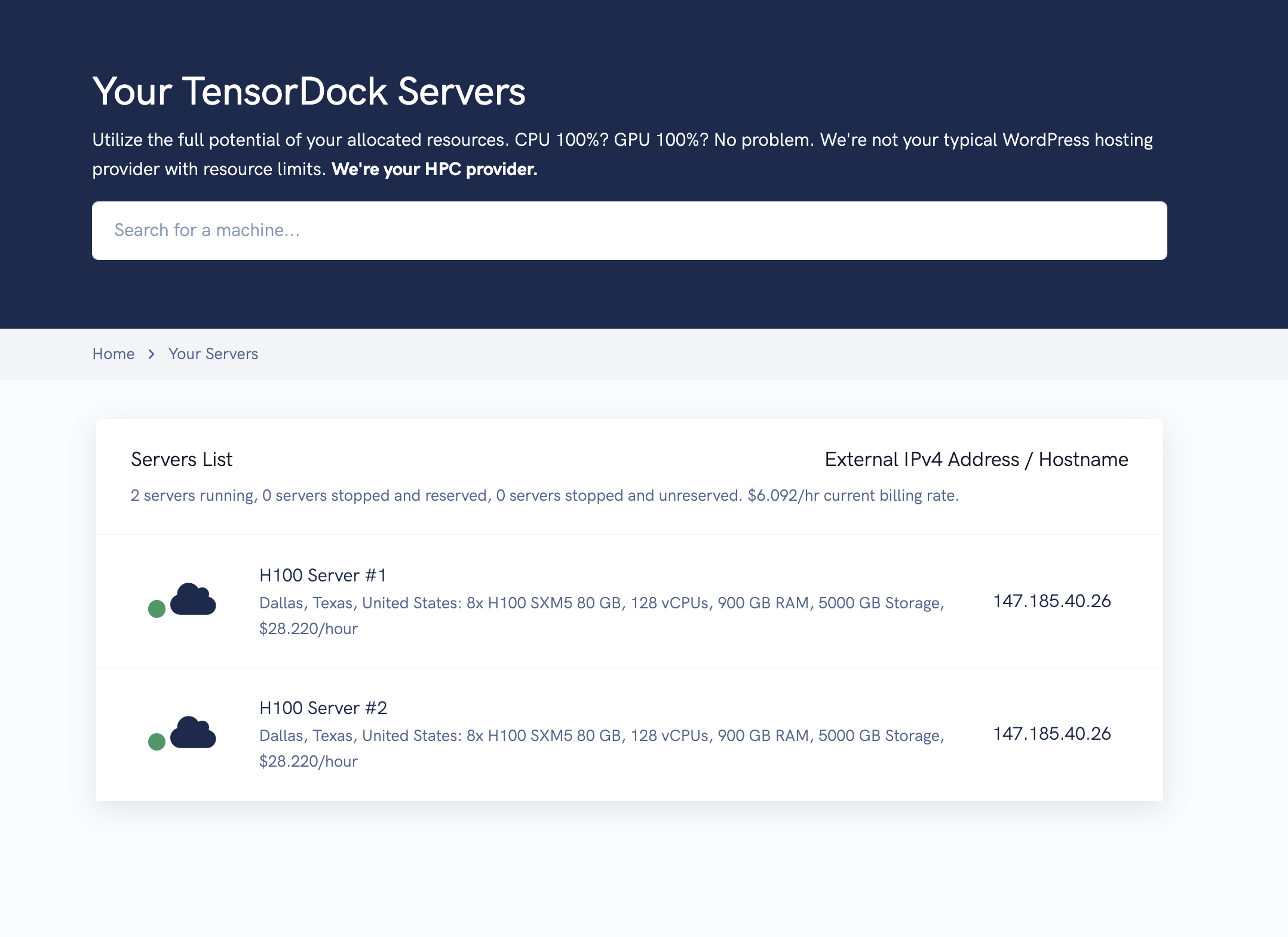

Unleash unparalleled computing power on the industry's most cost-effective cloud

We offer a select number of hostnodes on demand. You can deploy H100 virtual machines with 1 to 8 GPUs fully on demand, starting at just $2.25/hour depending on the CPU/RAM resources allocated, or $1.30/hour if deployed as a spot instance.

| | Hourly | Spot Bid | Monthly | Annual | 3-Year |
|---|---|---|---|---|---|
| Price per GPU, 8x minimum | $2.25/hr* | $1.91/hr** | $2.00/hr | $1.90/hr | $1.50/hr |
| Bare metal hostnode | N/A | N/A | $16.00/hr | $15.20/hr | $12.00/hr |

* On-demand pricing is billed à la carte; allocating additional CPU/RAM/storage will increase the cost. Reserved instances come with 1/8th of the advertised resources above, per GPU. Thus, an 8x configuration would include all the available hostnode resources listed above. Additionally, hosts are listed at staggered pricing from $1.90 to $2.50/hr. Availability at $1.90/hr is not guaranteed.

** The minimum bid is the lowest price you can bid, but actual pricing fluctuates based on market conditions.
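
The per-GPU and bare metal rates in the pricing table are related by the 8 GPUs in a hostnode, and a short sketch makes the comparison across commitment terms concrete. The 730-hour month is an assumption (average month length), and the figures exclude any extra CPU/RAM/storage charges.

```python
# Compare commitment terms using the per-GPU hourly rates from the table.
# Spot pricing is left out because it fluctuates with market conditions.

PER_GPU_HOURLY_USD = {
    "hourly": 2.25,
    "monthly": 2.00,
    "annual": 1.90,
    "3-year": 1.50,
}
GPUS_PER_HOSTNODE = 8
HOURS_PER_MONTH = 730  # assumption: average number of hours in a month


def hostnode_hourly(term: str) -> float:
    """Hourly cost of a full 8x hostnode at the given term's per-GPU rate."""
    return PER_GPU_HOURLY_USD[term] * GPUS_PER_HOSTNODE


def monthly_cost(term: str, gpus: int = GPUS_PER_HOSTNODE) -> float:
    """Approximate cost of running `gpus` GPUs for one month."""
    return PER_GPU_HOURLY_USD[term] * gpus * HOURS_PER_MONTH


def savings_vs_hourly(term: str) -> float:
    """Fractional discount of a term relative to the hourly rate."""
    base = PER_GPU_HOURLY_USD["hourly"]
    return (base - PER_GPU_HOURLY_USD[term]) / base


if __name__ == "__main__":
    for term in PER_GPU_HOURLY_USD:
        print(f"{term:>8}: ${hostnode_hourly(term):.2f}/hr per hostnode, "
              f"${monthly_cost(term):,.0f}/month, "
              f"{savings_vs_hourly(term):.0%} below hourly")
```

The hostnode figures for the monthly, annual, and 3-year terms match the bare metal row of the table.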

We built our own hypervisor, our own load balancers, and our own orchestration engine, all so that we can deliver the best performance.

VMs in 10 seconds, not 10 minutes. Instant stock validation. Resource webhooks/callbacks. À la carte resource allocation and resizing.

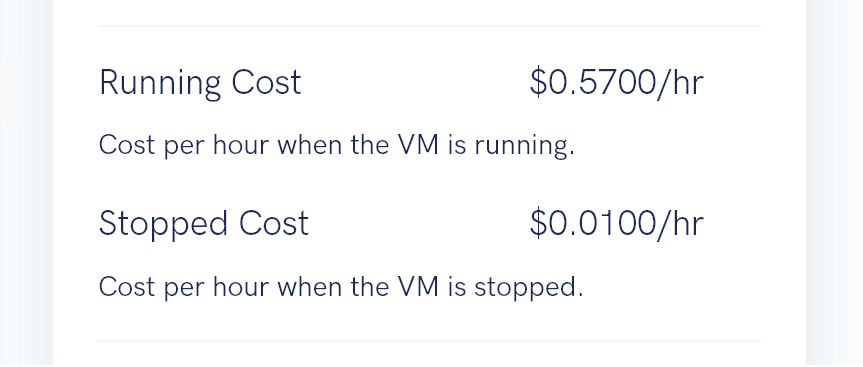

For on-demand servers, when you stop and unreserve your GPUs, you are billed a lower rate for storage. You can always request an export of your VM's disk image.

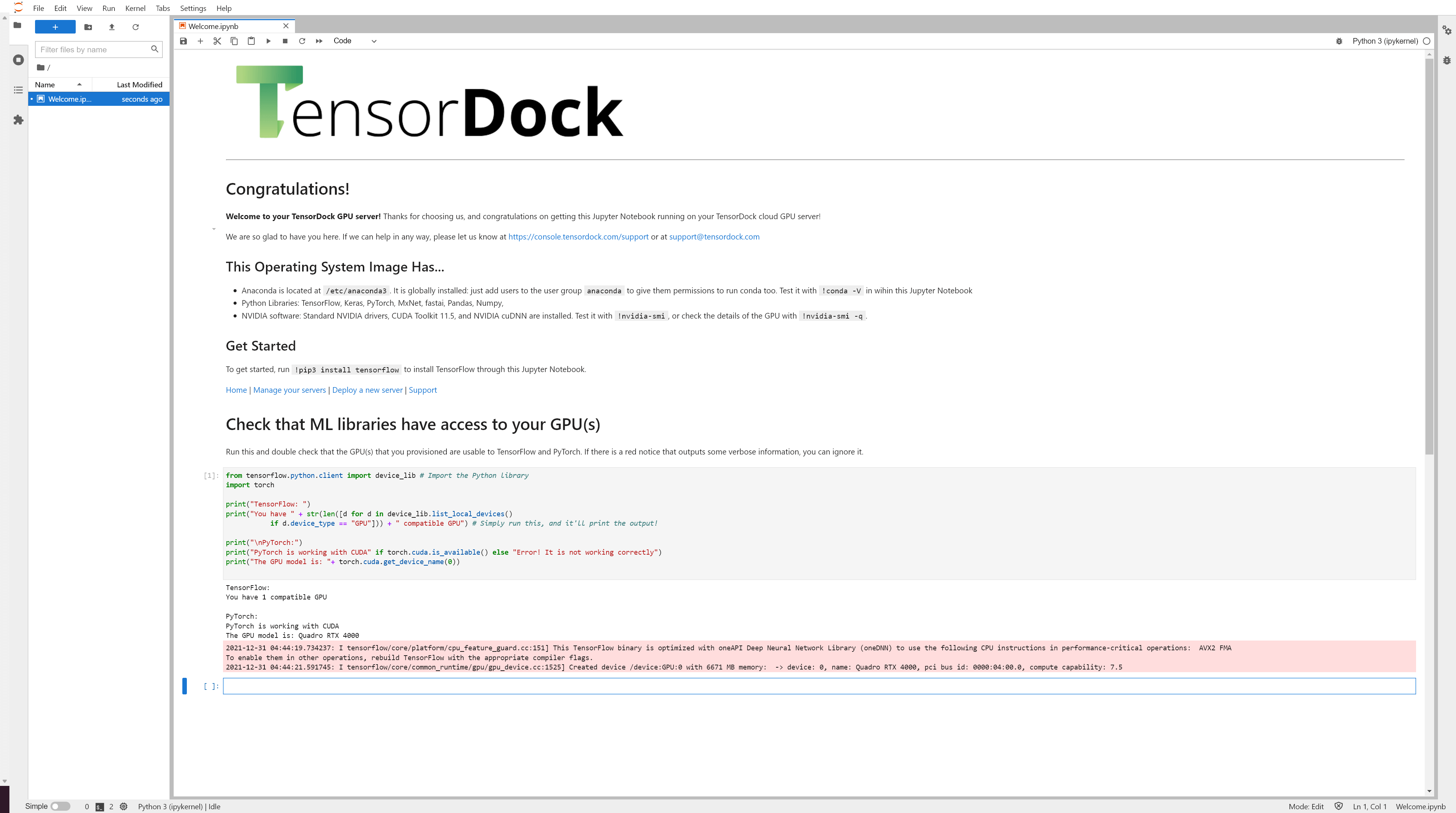

Deploy our machine learning image and get Jupyter Notebook/Lab out of the box. Slash your development setup times.

Our NVIDIA H100 clusters are hosted in Voltage Park's Dallas data center with 24/7 security, redundant power, multihomed network feeds, and 100% network and power uptime SLAs.

With SSAE-18 SOC 2 Type II, CJIS, HIPAA, and PCI-DSS compliance options available, you can trust that your most mission-critical workloads are in a safe place.

Hi! We're TensorDock, and we're building a radically more efficient cloud.

Five years ago, we started hosting GPU servers in two basements because we couldn't find a cloud suitable for our own AI projects. Soon, we couldn't keep up with demand, so we built a partner network to source supply.

Today, we operate a global GPU cloud with 27 GPU types located in dozens of cities. Some are owned by us, and some are owned by partners, but all are managed by us.

In addition to GPUs, we also offer CPU-only servers.

We speak in tokens and iterations, in IB and TLC/MLC, and we're excited to serve you.


We currently offer H100 SXM5s in our secure Evoque Dallas data center, protected by 24/7 security, powered via redundant feeds, and backed by a 100% power and network SLA.

Experience sub-40 millisecond latencies to nearly every US population center for lightning-fast LLM inference traffic.

Every layer of our infrastructure is protected by a variety of security measures, ensuring privacy and security for our customers.

Read more about our security.

We're thrilled to offer bare-metal virtualization for customers looking to rent full 8x configurations for a long period of time.

For on-demand customers, we offer KVM virtualization with root access and a dedicated GPU passed through. You get to use the full compute power of your GPU without resource contention.

For our on-demand platform, we operate on a pre-paid model: you deposit money and then provision a server. Once your balance nears $0, the server is automatically deleted. For these H100s, we are also earmarking a portion to be billed via long-term contracts.
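
Because a server is deleted once the pre-paid balance runs out, it helps to estimate how long a deposit will last. This is a minimal sketch with a hypothetical helper name; it assumes a constant hourly rate and ignores storage charges and any threshold at which deletion is actually triggered.

```python
# Estimate how many hours a pre-paid balance lasts at a constant hourly rate.
# Simplifying assumption: no other charges draw down the balance.


def hours_until_empty(balance_usd: float, hourly_rate_usd: float) -> float:
    """Return the number of hours until the balance reaches $0."""
    if hourly_rate_usd <= 0:
        raise ValueError("hourly_rate_usd must be positive")
    return balance_usd / hourly_rate_usd


if __name__ == "__main__":
    # Example: a $100 deposit running one GPU at the $2.25/hour on-demand rate.
    print(f"{hours_until_empty(100.0, 2.25):.1f} hours of runtime")
```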

Go ahead and build the future on TensorDock. Cloud-based machine learning and rendering have never been easier or cheaper.

Deploy an H100 GPU Server