Enterprise-grade servers hosted in Tier 3/4 data centers

For builders needing training and inference with no compromises.

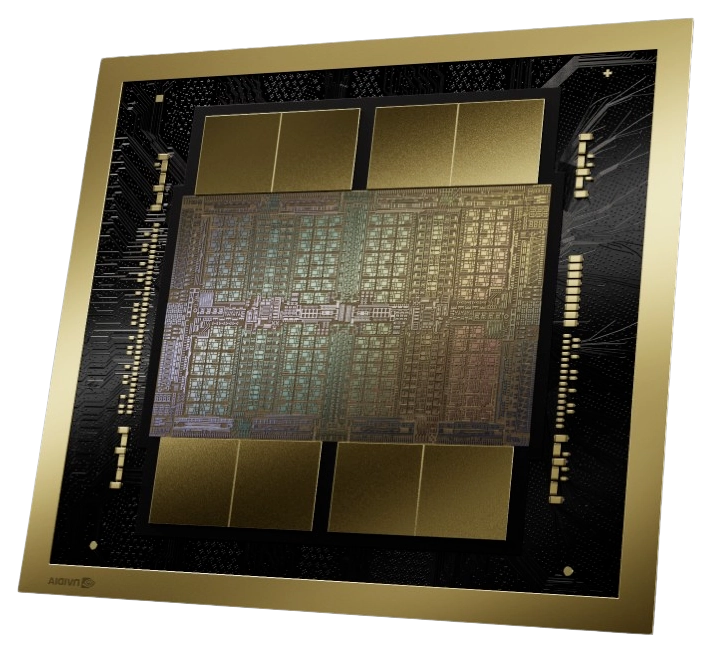

Deploy an H100 SXM

The best balance between price and performance for AI inference.

Deploy an A100 SXM

Truly unbeatable value for image processing, gaming, and rendering.

Deploy an RTX 4090

No waitlists, quotas, or price gouging. Just the world's best price-to-performance.

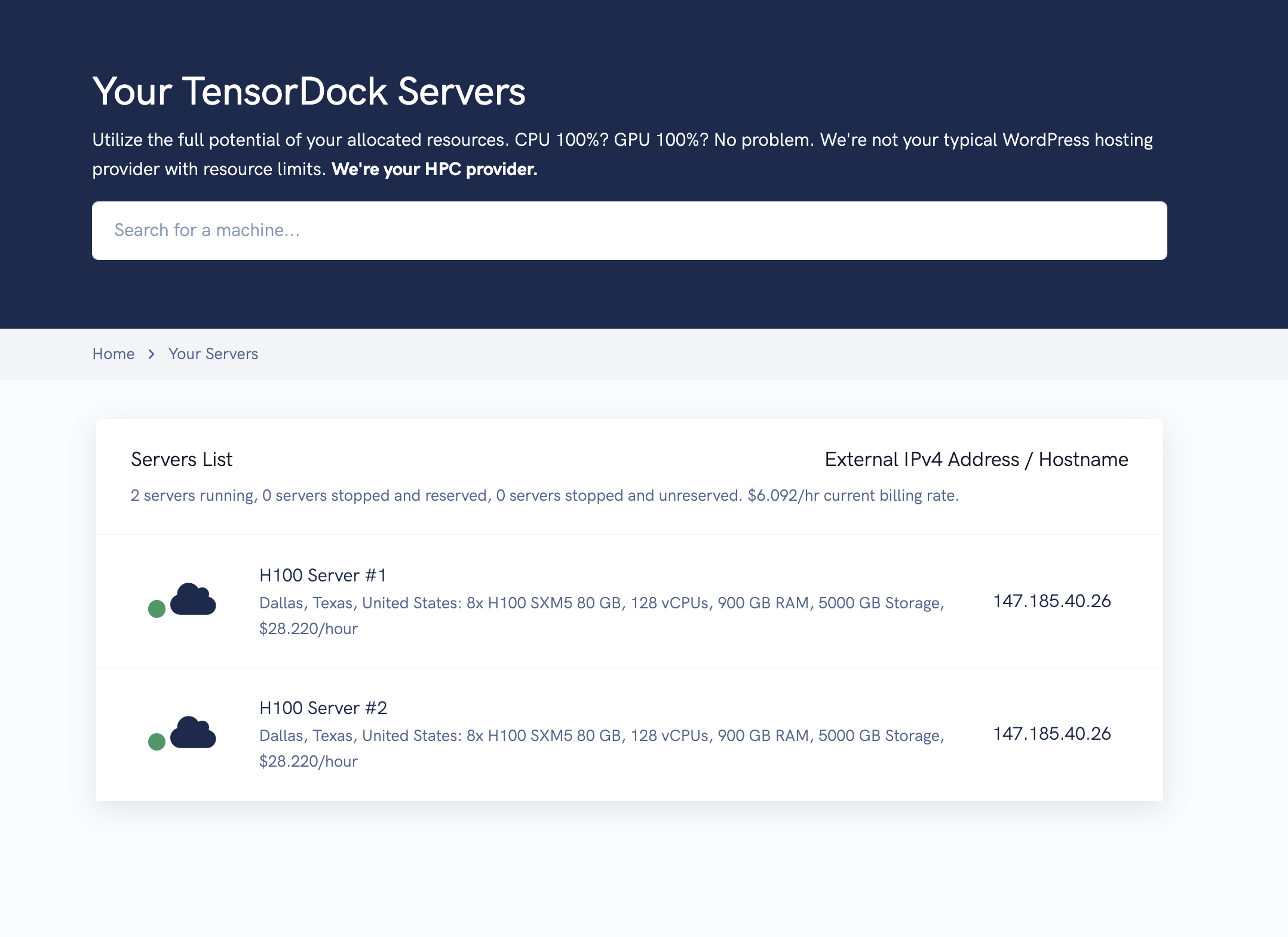

Register in just two clicks.

Start with as little as $5

Run your workload on our enterprise-grade hardware.

GPUs deployed

vCPUs deployed

of RAM deployed

...all in the past 24 hours

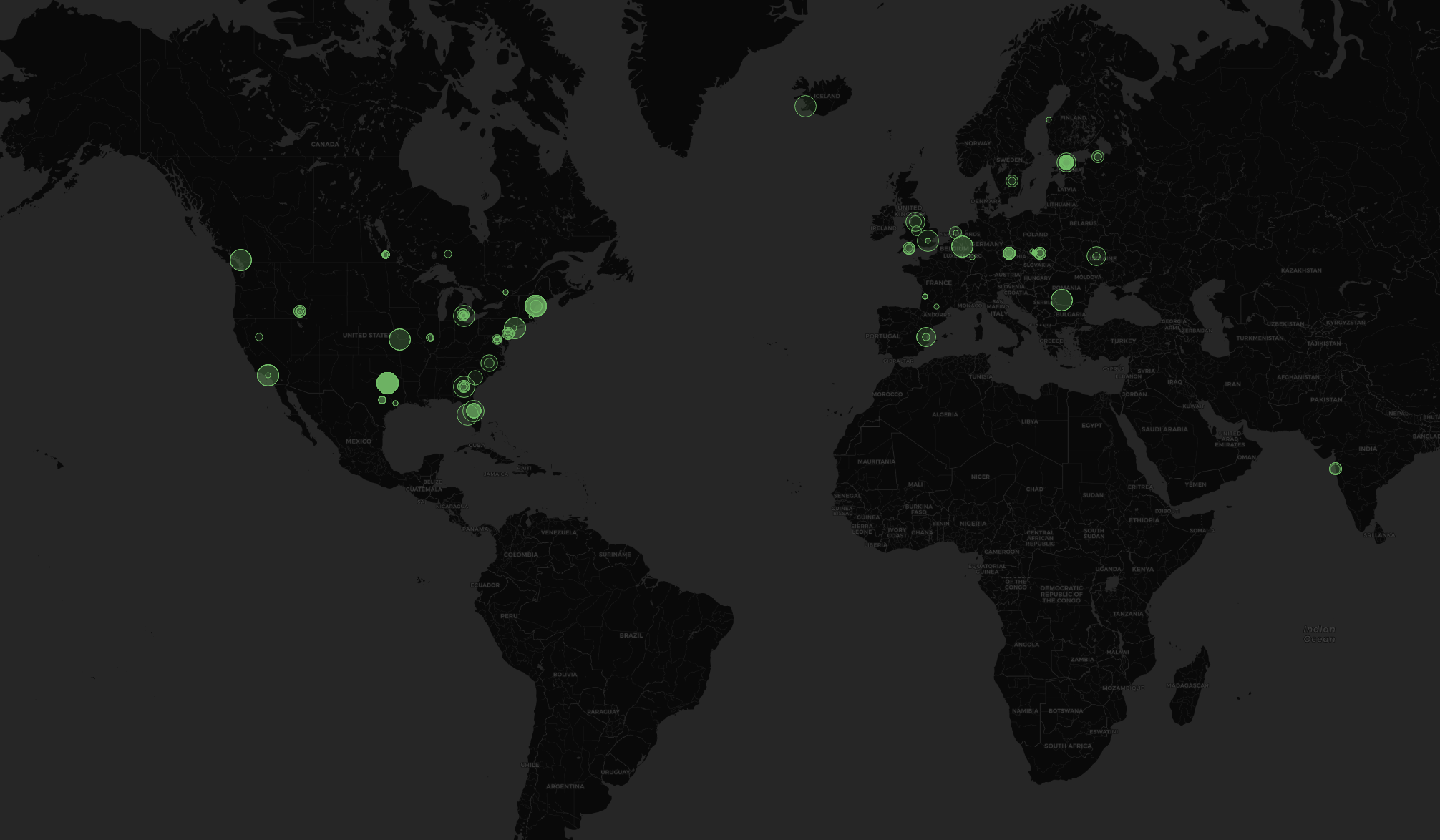

Up to 30,000 GPUs available through our close partners, including 45 different models to fit any workload and budget.

Take advantage of consumer GPUs such as the RTX 4090 and 3090 for up to 5x inference value.

Hundreds of GPUs ready to deploy on our dashboard at any time, distributed across 100+ locations in over 20 countries.

Reach your customers where they are.

Scale now

Well-documented, well-maintained, well-everything.

Built from scratch, with metadata, availability, and everything you need to manage your servers on TensorDock.

Try it out

All hosts are vetted by TensorDock for quality hardware, technical knowledge, and communication skills before their GPUs are listed.

TensorDock holds hosts to a 99.99% uptime standard and requires maintenance to be scheduled at least two weeks in advance.

Hosts who don't continue to meet our standards are removed from our platform.

Learn more

A la carte billing: fully customize your CPU, RAM, and storage, and pay only for what you need.

Save even more by committing to a long-term subscription. Contact us for details.

| GPU model (resources not included) | Typical hourly price (varies by host) |
|---|---|
| H100 SXM5 80GB | $2.25 |
| A100 SXM4 80GB | $1.80 |
| A100 PCIe 80GB | $1.50 |
| V100 SXM2 16GB | $0.17 |
| L40 48GB | $0.95 |
| RTX 4090 24GB | $0.35 |
| RTX 3090 24GB | $0.20 |
| RTX 6000 Ada 48GB | $0.75 |
| RTX A6000 48GB | $0.45 |
| RTX A4000 16GB | $0.10 |

| Resource | Minimum (can vary) | Price per hour (billed per second) |
|---|---|---|
| Minimum 1 Gbps | Included | Included |
| Per vCPU (1 thread, 1/2 physical core) | 2 vCPUs ($0.006/hour) | $0.003 |
| Per GB of RAM | 4 GB ($0.002/hour) | $0.002 |
| Per GB of block NVMe SSD storage | 20 GB ($0.0010/hour) | $0.00005 |

Last Updated: July 24, 2024. Please check the dashboard for live pricing and availability.
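As a rough illustration of how a la carte, per-second billing adds up, here is a minimal Python sketch. It assumes the typical prices listed in the tables above (real prices vary by host), and the helper names `hourly_cost` and `cost_for_runtime` are hypothetical, not part of any TensorDock API.

```python
# Illustrative figures copied from the pricing tables above (USD per hour).
# Actual prices vary by host; check the dashboard for live pricing.
GPU_HOURLY = {
    "H100 SXM5 80GB": 2.25,
    "A100 SXM4 80GB": 1.80,
    "RTX 4090 24GB": 0.35,
}
VCPU_HOURLY = 0.003          # per vCPU
RAM_GB_HOURLY = 0.002        # per GB of RAM
STORAGE_GB_HOURLY = 0.00005  # per GB of block NVMe SSD storage


def hourly_cost(gpu_model, gpu_count, vcpus, ram_gb, storage_gb):
    """Hypothetical helper: sum the a la carte line items for one server."""
    return (
        GPU_HOURLY[gpu_model] * gpu_count
        + vcpus * VCPU_HOURLY
        + ram_gb * RAM_GB_HOURLY
        + storage_gb * STORAGE_GB_HOURLY
    )


def cost_for_runtime(hourly_rate, seconds):
    """Per-second billing: charge only for the seconds the server ran."""
    return hourly_rate * seconds / 3600


# Example configuration (an assumption for illustration only).
rate = hourly_cost("RTX 4090 24GB", gpu_count=1, vcpus=8, ram_gb=32, storage_gb=100)
print(f"Estimated rate: ${rate:.3f}/hour")
print(f"Cost for 90 minutes: ${cost_for_runtime(rate, 90 * 60):.4f}")
```

The same function works for any row in the GPU table; add the model and its typical price to `GPU_HOURLY` to estimate other configurations.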

Comparison for an 8x H100 server. Savings can be higher with mid-range enterprise GPUs.

| Competitor | Price | TensorDock Price | Savings |
|---|---|---|---|
| AWS | $98.32 | $29.31 | 70% |
| Azure | $98.32 | $29.31 | 70% |
| Google Cloud | $88.49 | $29.31 | 67% |
| Paperspace | $47.60 | $29.31 | 38% |
| CoreWeave | $38.08 | $29.31 | 23% |
| RunPod | $31.92 | $29.31 | 8% |

Last Updated: July 24, 2024. TensorDock does not make any guarantees that information on this page is accurate. Confirm this is up to date with your own research.
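The savings column appears to be one minus the ratio of the TensorDock price to the competitor price, rounded to the nearest percent. The short Python sketch below reproduces it from the table's own figures. The formula is an inference from the numbers, and `savings_percent` is a hypothetical helper.

```python
# Prices for an 8x H100 server, copied from the comparison table above.
TENSORDOCK_PRICE = 29.31

COMPETITOR_PRICES = {
    "AWS": 98.32,
    "Azure": 98.32,
    "Google Cloud": 88.49,
    "Paperspace": 47.60,
    "CoreWeave": 38.08,
    "RunPod": 31.92,
}


def savings_percent(competitor_price, our_price=TENSORDOCK_PRICE):
    """Assumed formula: the share of the competitor's price that is saved."""
    return (1 - our_price / competitor_price) * 100


for name, price in COMPETITOR_PRICES.items():
    print(f"{name}: {savings_percent(price):.0f}% savings")
```

Swapping in current prices from your own research gives an up-to-date comparison.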

Deploy Your GPU Server Now

Let's chat! If you don't see what you need, we can work it out. We'll get back to you within 24 hours.

Book a meeting Message us

Most hardware on our platform is now hosted in certified data centers. But as an additional layer of security, we revoke SSH access from our hosts so they cannot access customer data without our knowledge.

TensorDock restricts hostnode access to only those who need it. We have an agent monitoring hostnodes for logins and suspicious activity, from any source.

Learn more.

We operate on a pay-as-you-go model. Users deposit funds, and we deduct from their balance continuously once a server is deployed. When your balance reaches $0, your servers are automatically deleted. If you need to rent servers long-term, reach out to discuss our reserved pricing.
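Because servers are deleted when the balance reaches $0, it helps to estimate how long a deposit will last. The sketch below assumes a constant hourly rate and no further deposits. `hours_until_empty` is a hypothetical helper, not a TensorDock API call.

```python
def hours_until_empty(balance_usd, hourly_rate_usd):
    """Estimate the hours of runtime left before the balance reaches $0.

    Assumes a constant hourly rate and no further deposits.
    """
    if hourly_rate_usd <= 0:
        raise ValueError("hourly rate must be positive")
    return max(balance_usd, 0.0) / hourly_rate_usd


# Example: a $5 deposit against a $0.35/hour rate (GPU only, resources excluded).
print(f"{hours_until_empty(5.00, 0.35):.1f} hours of runtime")
```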

We're a marketplace of independent hosts who compete and set their own pricing. This ensures that you, as the customer, always have access to the market's best pricing.

Additionally, hosts have different locations and redundancy, leading to varying costs. Our marketplace offers you options to pay based on your preferences.

airgpu relies on TensorDock's API to deploy Windows virtual machines for cloud gamers. TensorDock's abundant GPU stock allows airgpu to scale during weekend peaks without worrying about compute availability.

ELBO uses TensorDock's reliable and secure GPU cloud to generate art. TensorDock's highly cost-effective servers run their workloads faster for less than the big clouds.

Professor Skyler Liang from Florida State University researches GANs with TensorDock GPUs. TensorDock's superior economics allow researchers to do more with their limited university budgets.

Creavite combines TensorDock's Windows VMs with Adobe software to render logo animations. TensorDock's CPU-only instances allow Creavite to fully integrate their workflows and stay on one cloud.

We connect customers to the best available compute. Cutting-edge hardware in Tier 3/4 Data Centers, for maximum security and reliability. Converted mining rigs, for maximum price-to-performance. We've got both, for whatever suits your needs best.

Our marketplace approach guarantees the best deal in the industry. Get the availability and location distribution of a hyperscaler, without the quotas or nonsense.
